¶ 1 Overview

This guide describes how to configure and use the Nuance Bot Service (BS) plugin for the UniMRCP server. The document is intended for users with a working knowledge of the Nuance Mix DLGaaS API and UniMRCP.

¶ 1.1 Installation

For installation of the packages, use one of the manuals below.

¶ 1.2 Applicable Versions

Instructions provided in this guide are applicable to the following versions.

UniMRCP 1.7.0 and above

UniMRCP Nuance BS Plugin 1.0.0 and above

¶ 2 Supported Features

This is a brief checklist of the features currently supported by the UniMRCP server running with the Nuance BS plugin.

¶ 2.1 MRCP Methods

- DEFINE-GRAMMAR

- RECOGNIZE

- START-INPUT-TIMERS

- STOP

- SET-PARAMS

- GET-PARAMS

¶ 2.2 MRCP Events

- RECOGNITION-COMPLETE

- START-OF-INPUT

¶ 2.3 MRCP Header Fields

- Input-Type

- No-Input-Timeout

- Recognition-Timeout

- Speech-Complete-Timeout

- Speech-Incomplete-Timeout

- Waveform-URI

- Media-Type

- Completion-Cause

- Confidence-Threshold

- Start-Input-Timers

- DTMF-Interdigit-Timeout

- DTMF-Term-Timeout

- DTMF-Term-Char

- Save-Waveform

- Speech-Language

- Cancel-If-Queued

- Sensitivity-Level

¶ 2.4 Grammars

- Built-in and dynamic speech contexts

- Built-in/embedded DTMF grammar

- SRGS XML (limited support)

¶ 2.5 Results

- NLSML

¶ 3 Configuration Format

The configuration file of the Nuance BS plugin is located at /opt/unimrcp/conf/umsnuancebs.xml and is written in XML.

¶ 3.1 Document

The root element of the XML document must be <umsnuancebs>.

Attributes

| Name | Unit | Description |

|---|---|---|

| license-file | File path | Specifies the license file. File name may include patterns containing '*' sign. If multiple files match the pattern, the most recent one gets used. |

| subscription-key-file | File path | Specifies the Nuance Mix credentials to use. File name may include patterns containing '*' sign. If multiple files match the pattern, the most recent one gets used. |

Parent

- None.

Children

| Name | Unit | Description |

|---|---|---|

| streaming-recognition | String | Specifies parameters of streaming recognition method employed via gRPC. |

| results | String | Specifies parameters of recognition results set in RECOGNITION-COMPLETE events. |

| speech-dtmf-input-detector | String | Specifies parameters of the speech and DTMF input detector. |

| utterance-manager | String | Specifies parameters of the utterance manager. |

| rdr-manager | String | Specifies parameters of the Recognition Details Record (RDR) manager. |

| monitoring-agent | String | Specifies parameters of the monitoring agent. |

| license-server | String | Specifies parameters used to connect to the license server. The use of the license server is optional. |

Example

This is an example of a bare document.

<umsnuancebs license-file="umsnuancebs_*.lic" subscription-key-file="nuance.subscription.key">

</umsnuancebs>
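The structure described above can be checked with a short script before the server is restarted. The following is a minimal sketch in Python and is not part of the plugin: the function name check_config and the sample document are illustrative assumptions, while the element names are taken from the Children table.

```python
import xml.etree.ElementTree as ET

# Child elements listed in the Children table of the <umsnuancebs> document.
KNOWN_CHILDREN = {
    "streaming-recognition",
    "results",
    "speech-dtmf-input-detector",
    "utterance-manager",
    "rdr-manager",
    "monitoring-agent",
    "license-server",
}


def check_config(xml_text):
    """Return the names of child elements not listed in this guide.

    Raises ValueError if the root element is not <umsnuancebs>.
    """
    root = ET.fromstring(xml_text)
    if root.tag != "umsnuancebs":
        raise ValueError(f"unexpected root element <{root.tag}>")
    return [child.tag for child in root if child.tag not in KNOWN_CHILDREN]


# Hypothetical sample document used only for illustration.
sample = (
    '<umsnuancebs license-file="umsnuancebs_*.lic" '
    'subscription-key-file="nuance.subscription.key">'
    '<results format="standard"/>'
    "</umsnuancebs>"
)
unknown = check_config(sample)  # empty list: every child is a known element
```

An empty list means every child element is one of those described in this section; the sketch does not validate attributes.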

¶ 3.2 Streaming Recognition

This element specifies parameters of streaming recognition.

Attributes

| Name | Unit | Description |

|---|---|---|

| language | String | Specifies the default language to use, if not set by the client. |

| start-of-input | String | Specifies the source of start of input event sent to the client (use "service-originated" for an event originated based on a first-received interim result and "internal" for an event determined by plugin). |

| max-alternatives | Integer | Specifies the maximum number of speech recognition result alternatives to be returned. Can be overridden by client by means of the header field N-Best-List-Length. |

| alternatives-below-threshold | Boolean | Specifies whether to return speech recognition result alternatives with the confidence score below the confidence threshold. |

| single-utterance | Boolean | Specifies whether to detect a single spoken utterance or perform continuous recognition. |

| skip-unsupported-grammars | Boolean | Specifies whether to skip or raise an error while referencing a malformed or not supported grammar. |

| skip-empty-results | Boolean | Specifies whether to implicitly initiate a new gRPC request if the current one completes with an empty result. |

| transcription-grammar | String | Specifies the name of the built-in speech transcription grammar. The grammar can be referenced as builtin:speech/transcribe or builtin:grammar/transcribe, where transcribe is the default value of this parameter. |

| grammar-param-separator | String | Specifies a separator of optional parameters passed to a built-in grammar. The separator defaults to ';'. |

| http-proxy | String | Specifies the URI of HTTP proxy, if used. |

| stream-creation-timeout | Time interval [msec] | Specifies how long to wait for gRPC stream creation. If the timeout is set to 0, no timer is used. Otherwise, if the timeout elapses, gRPC stream creation is cancelled. |

| grpc-log-redirection | Boolean | Specifies whether to enable gRPC log redirection. |

| grpc-log-verbosity | String | Specifies gRPC logging verbosity. One of DEBUG, INFO, ERROR. See GRPC_VERBOSITY for more info. |

| grpc-log-trace | String | Specifies a comma separated list of tracers producing gRPC logs. Use 'all' to turn all tracers on. See GRPC_TRACE for more info. |

| inter-result-timeout | Time interval [msec] | Specifies a timeout between interim results containing transcribed speech. If the timeout elapses, input is considered complete. The timeout defaults to 0 (disabled). |

| auth-validation-period | Time interval [sec] | Specifies a period in seconds used to re-validate access token. |

| auth-request-timeout | Time interval [sec] | Specifies a timeout in seconds set on HTTP requests placed to re-validate access token. |

| selector-channel | String | Specifies the selector channel used in the Start request. Can be overridden by client. |

| selector-library | String | Specifies the selector library used in the Start request. Can be overridden by client. |

| session-timeout | Time interval [sec] | Specifies the session timeout used in the Start request. Can be overridden by client. |

| model-uri | String | Specifies the model URI used in the Start request. Can be overridden by client. |

| model-type | String | Specifies the model type used in the Start request. Can be overridden by client. |

| speech-domain | String | Specifies the speech_domain field in RecognitionParameters. Can be overridden by client. |

| formatting-scheme | String | Specifies the formatting scheme field in RecognitionParameters. Can be overridden by client. |

| auto-punctuate | Boolean | Specifies whether to enable automatic punctuation. Can be overridden by client. |

| filter-profanity | Boolean | Specifies whether to filter profanities in recognition result. Can be overridden by client. |

| include-tokenization | Boolean | Specifies whether to include tokenized recognition result. Can be overridden by client. |

| discard-speaker-adaptation | Boolean | Specifies whether to discard updated speaker data, if speaker profiles are used. By default, data is stored. Can be overridden by client. |

| suppress-call-recording | Boolean | Specifies whether to redact transcription results in the call logs and disable audio capture. Can be overridden by client. |

| mask-load-failures | Boolean | Specifies whether errors loading external resources shall not terminate recognition. Can be overridden by client. |

| suppress-initial-capitalization | Boolean | Specifies whether to suppress automatic capitalization of the first word in a sentence. Can be overridden by client. |

| allow-zero-base-lm-weight | Boolean | Specifies whether custom resources (DLMs, wordsets, and others) can use the entire weight space. Can be overridden by client. |

| filter-wakeup-word | Boolean | Specifies whether to remove the wakeup word from the final result. Can be overridden by client. |

| end-stream-no-valid-hypotheses | Boolean | Determines whether the dialog application or the client application handles the dialog flow when ASRaaS does not return a valid hypothesis. Can be overridden by client. |

Parent

<umsnuancebs>

Children

- None.

Example

This is an example of the streaming recognition element.

<streaming-recognition

single-utterance="true"

start-of-input="service-originated"

start-of-input-event="required"

language="en-US"

max-alternatives="1"

skip-unsupported-grammars="true"

skip-empty-results="true"

skip-no-input="true"

transcription-grammar="transcribe"

grammar-param-separator=";"

generate-output-audio="false"

stream-creation-timeout="0"

inter-result-timeout="0"

grpc-log-redirection="false"

grpc-log-verbosity=""

grpc-log-trace=""

max-recv-message-length="-1"

max-send-message-length="-1"

selector-channel="default"

selector-library="default"

session-timeout="0"

model-uri=""

model-type=""

speech-domain=""

auto-punctuate="false"

filter-profanity="false"

include-tokenization="false"

discard-speaker-adaptation="false"

suppress-call-recording="false"

mask-load-failures="false"

suppress-initial-capitalization="false"

allow-zero-base-lm-weight="false"

filter-wakeup-word="false"

end-stream-no-valid-hypotheses="false"

auth-validation-period="600"

auth-request-timeout="30"

/>

¶ 3.3 Results

This element specifies parameters of recognition results set in RECOGNITION-COMPLETE events.

Attributes

| Name | Unit | Description |

|---|---|---|

| format | String | Specifies the format of results to be returned to the client (use "standard" for NLSML and "json" for JSON). |

| indent | Integer | Specifies the indent to use while composing the results. |

| replace-dots | Boolean | Specifies whether to replace '.' with '_' in the parameter names, used while composing an XML content. The parameter is observed only if the format is set to standard. |

| replace-dashes | Boolean | Specifies whether to replace '-' with '_' in the parameter names, used while composing an XML content. The parameter is observed only if the format is set to standard. |

| confidence-format | String | Specifies the format of the confidence score to be returned. The parameter is observed only if the format is set to standard. Use one of: |

| tag-format | String | Specifies the format of the instance element to be returned. The parameter is observed only if the format is set to standard. Use one of: |

| tag-encoding | String | Specifies the encoding of the instance element to be returned. The parameter is observed only if the format is set to standard and tag-format is *semantics/json. Use one of: |

| event-input-text | String | Specifies the input text to be filled in NLSML on a triggered activity. The parameter defaults to 'null', if not specified. |

Parent

<umsnuancebs>

Children

- None.

Example

This is an example of the results element.

<results

format="standard"

indent="0"

replace-dots="true"

confidence-format="auto"

tag-format="semantics/xml"

/>

¶ 3.4 Speech and DTMF Input Detector

This element specifies parameters of the speech and DTMF input detector.

Attributes

| Name | Unit | Description |

|---|---|---|

| vad-mode | Integer | Specifies an operating mode of VAD in the range of [0 ... 3]. Default is 1. |

| speech-start-timeout | Time interval [msec] | Specifies how long to wait in transition mode before triggering a start of speech input event. |

| speech-complete-timeout | Time interval [msec] | Specifies how long to wait in transition mode before triggering an end of speech input event. The complete timeout is used when there is an interim result available. |

| speech-incomplete-timeout | Time interval [msec] | Specifies how long to wait in transition mode before triggering an end of speech input event. The incomplete timeout is used as long as there is no interim result available. Afterwards, the complete timeout is used. |

| noinput-timeout | Time interval [msec] | Specifies how long to wait before triggering a no-input event. |

| input-timeout | Time interval [msec] | Specifies how long to wait for input to complete. |

| dtmf-interdigit-timeout | Time interval [msec] | Specifies a DTMF inter-digit timeout. |

| dtmf-term-timeout | Time interval [msec] | Specifies a DTMF input termination timeout. |

| dtmf-term-char | Character | Specifies a DTMF input termination character. |

| speech-leading-silence | Time interval [msec] | Specifies desired silence interval preceding spoken input. |

| speech-trailing-silence | Time interval [msec] | Specifies desired silence interval following spoken input. |

| speech-output-period | Time interval [msec] | Specifies an interval used to send speech frames to the recognizer. |

Parent

<umsnuancebs>

Children

- None.

Example

The example below defines a typical speech and DTMF input detector having the default parameters set.

<speech-dtmf-input-detector

vad-mode="2"

speech-start-timeout="300"

speech-complete-timeout="1000"

speech-incomplete-timeout="15000"

noinput-timeout="5000"

input-timeout="30000"

dtmf-interdigit-timeout="5000"

dtmf-term-timeout="10000"

dtmf-term-char=""

speech-leading-silence="300"

speech-trailing-silence="300"

speech-output-period="200"

/>

¶ 3.5 Utterance Manager

This element specifies parameters of the utterance manager.

Attributes

| Name | Unit | Description |

|---|---|---|

| save-waveforms | Boolean | Specifies whether to save waveforms or not. |

| purge-existing | Boolean | Specifies whether to delete existing waveforms on start-up. |

| max-file-age | Time interval [min] | Specifies a time interval in minutes after expiration of which a waveform is deleted. Set 0 for infinite. |

| max-file-count | Integer | Specifies the max number of waveforms to store. If reached, the oldest waveform is deleted. Set 0 for infinite. |

| waveform-base-uri | String | Specifies the base URI used to compose an absolute waveform URI. |

| waveform-folder | Dir path | Specifies a folder the waveforms should be stored in. |

| file-prefix | String | Specifies a prefix used to compose the name of the file to be stored. Defaults to 'umsnuancebs-', if not specified. |

| use-logging-tag | Boolean | Specifies whether to use the MRCP header field Logging-Tag, if present, to compose the name of the file to be stored. |

Parent

<umsnuancebs>

Children

- None.

Example

The example below defines a typical utterance manager having the default parameters set.

<utterance-manager

save-waveforms="false"

purge-existing="false"

max-file-age="60"

max-file-count="100"

waveform-base-uri="http://localhost/utterances/"

waveform-folder=""

/>

¶ 3.6 RDR Manager

This element specifies parameters of the Recognition Details Record (RDR) manager.

Attributes

| Name | Unit | Description |

|---|---|---|

| save-records | Boolean | Specifies whether to save recognition details records or not. |

| purge-existing | Boolean | Specifies whether to delete existing records on start-up. |

| max-file-age | Time interval [min] | Specifies a time interval in minutes after expiration of which a record is deleted. Set 0 for infinite. |

| max-file-count | Integer | Specifies the max number of records to store. If reached, the oldest record is deleted. Set 0 for infinite. |

| record-folder | Dir path | Specifies a folder to store recognition details records in. Defaults to ${UniMRCPInstallDir}/var. |

| file-prefix | String | Specifies a prefix used to compose the name of the file to be stored. Defaults to 'umsnuancebs-', if not specified. |

| use-logging-tag | Boolean | Specifies whether to use the MRCP header field Logging-Tag, if present, to compose the name of the file to be stored. |

Parent

<umsnuancebs>

Children

- None.

Example

The example below defines a typical RDR manager having the default parameters set.

<rdr-manager

save-records="false"

purge-existing="false"

max-file-age="60"

max-file-count="100"

record-folder=""

/>

¶ 3.7 Monitoring Agent

This element specifies parameters of the monitoring agent.

Attributes

| Name | Unit | Description |

|---|---|---|

| refresh-period | Time interval [sec] | Specifies a time interval in seconds used to periodically refresh usage details. See <usage-refresh-handler>. |

Parent

<umsnuancebs>

Children

<usage-change-handler>, <usage-refresh-handler>

Example

The example below defines a monitoring agent with usage change and refresh handlers.

<monitoring-agent refresh-period="60">

<usage-change-handler>

<log-usage enable="true" priority="NOTICE"/>

</usage-change-handler>

<usage-refresh-handler>

<dump-channels enable="true" status-file="umsnuancebs-channels.status"/>

</usage-refresh-handler>

</monitoring-agent>

¶ 3.8 Usage Change Handler

This element specifies an event handler called on every usage change.

Attributes

- None.

Parent

<monitoring-agent>

Children

<log-usage>, <update-usage>, <dump-channels>

Example

This is an example of the usage change event handler.

<usage-change-handler>

<log-usage enable="true" priority="NOTICE"/>

<update-usage enable="false" status-file="umsnuancebs-usage.status"/>

<dump-channels enable="false" status-file="umsnuancebs-channels.status"/>

</usage-change-handler>

¶ 3.9 Usage Refresh Handler

This element specifies an event handler called periodically to update usage details.

Attributes

- None.

Parent

<monitoring-agent>

Children

<log-usage>, <update-usage>, <dump-channels>

Example

This is an example of the usage refresh event handler.

<usage-refresh-handler>

<log-usage enable="true" priority="NOTICE"/>

<update-usage enable="false" status-file="umsnuancebs-usage.status"/>

<dump-channels enable="false" status-file="umsnuancebs-channels.status"/>

</usage-refresh-handler>

¶ 3.10 License Server

This element specifies parameters used to connect to the license server.

Attributes

| Name | Unit | Description |

|---|---|---|

| enable | Boolean | Specifies whether the use of license server is enabled or not. If enabled, the license-file attribute is not honored. |

| server-address | String | Specifies the IP address or host name of the license server. |

| certificate-file | File path | Specifies the client certificate used to connect to the license server. File name may include patterns containing a '*' sign. If multiple files match the pattern, the most recent one gets used. |

| ca-file | File path | Specifies the certificate authority used to validate the license server. |

| channel-count | Integer | Specifies the number of channels to check out from the license server. If not specified or set to 0, either all available channels or a pool of channels will be checked out based on the configuration of the license server. |

| http-proxy-address | String | Specifies the IP address or host name of the HTTP proxy server, if used. |

| http-proxy-port | Integer | Specifies the port number of the HTTP proxy server, if used. |

| security-level | Integer | Specifies the SSL security level, which defaults to 1. Applicable since OpenSSL 1.1.0. |

Parent

<umsnuancebs>

Children

- None.

Example

The example below defines a typical configuration which can be used to connect to a license server located, for example, at 10.0.0.1.

<license-server

enable="true"

server-address="10.0.0.1"

certificate-file="unilic_client_*.crt"

ca-file="unilic_ca.crt"

/>

¶ 4 Configuration Steps

This section outlines common configuration steps.

¶ 4.1 Using Default Configuration

The default configuration should be sufficient for general use.

¶ 4.2 Starting Conversation

The conversation is associated with the MRCP session. The plugin maintains the conversation state implicitly by sending the StartRequest to the DLGaaS API upon processing the first RECOGNIZE request in a new MRCP session.

¶ 4.3 Stopping Conversation

The conversation is associated with the MRCP session. The plugin implicitly sends the StopRequest to the DLGaaS API when the associated MRCP session is being closed.

¶ 4.4 Kicking off Conversation

To kick off a new conversation, the first RECOGNIZE request shall have the vendor-specific parameter method set to Execute and optionally have the model-uri specified, for example, as follows.

builtin:speech/transcribe?method=Execute;model-uri=urn:nuance-mix:tag:model/A7315_C450210/mix.dialog

The RECOGNIZE request will complete without any input from the user, and the response will be sent back to the user application in a RECOGNITION-COMPLETE event.

¶ 4.4 Interacting with User

To interact with the user and move forward through the conversation, subsequent RECOGNIZE requests shall be placed in a loop having the vendor-specific parameter method set to ExecuteStream.

builtin:speech/transcribe?method=ExecuteStream
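The sequence of grammar URIs used across a conversation can be sketched as follows. This is an illustrative Python generator rather than client code for any particular MRCP library: the function name conversation_grammars is hypothetical, and the ';' separator assumes the default value of grammar-param-separator.

```python
from itertools import islice


def conversation_grammars(model_uri=None, separator=";"):
    """Yield the grammar URI for each successive RECOGNIZE request.

    The first URI kicks off the conversation (method=Execute); all
    subsequent ones carry user input (method=ExecuteStream).
    """
    first = "builtin:speech/transcribe?method=Execute"
    if model_uri:
        # model-uri is optional in the kick-off request.
        first += f"{separator}model-uri={model_uri}"
    yield first
    while True:
        yield "builtin:speech/transcribe?method=ExecuteStream"


# Grammar URIs for the first three RECOGNIZE requests of a session.
uris = list(islice(
    conversation_grammars("urn:nuance-mix:tag:model/A7315_C450210/mix.dialog"), 3))
```

The client application decides when to leave the loop, for example when the dialog signals that the conversation has ended; that logic is outside the scope of this sketch.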

¶ 4.5 Specifying Recognition Language

Recognition language can be specified by the client per MRCP session by means of the header field Speech-Language set in a SET-PARAMS or RECOGNIZE request. Otherwise, the parameter language set in the configuration file umsnuancebs.xml is used. The parameter defaults to en-US.

The recognition language can be set by the attribute xml:lang specified in the SRGS grammar.

<?xml version="1.0" encoding="UTF-8"?>

<grammar mode="voice" root="transcribe" version="1.0"

xml:lang="es-ES"

xmlns="http://www.w3.org/2001/06/grammar">

<meta name="scope" content="builtin"/>

<rule id="transcribe"><one-of/></rule>

</grammar>

The recognition language can also be set by the optional parameter language passed to a built-in grammar.

builtin:speech/transcribe?language=es-ES

¶ 4.6 Specifying Sampling Rate

Sampling rate is determined based on the SDP negotiation. Refer to the configuration guide of the UniMRCP server on how to specify supported encodings and sampling rates to be used in communication between the client and server.

The native sampling rate with the linear16 audio encoding is used in gRPC streaming to the service endpoint.

¶ 4.7 Specifying Speech Input Parameters

While the default parameters specified for the speech input detector are sufficient for general use, various parameters can be adjusted to better suit a particular requirement.

- speech-start-timeout

This parameter is used to trigger a start of speech input. The shorter the timeout, the sooner a START-OF-INPUT event is delivered to the client. However, a short timeout may also lead to a false positive.

- speech-complete-timeout

This parameter is used to trigger an end of speech input. The shorter the timeout, the shorter the response time. However, a short timeout may also lead to a false positive.

Note that two events are monitored to trigger an end of speech input: an expiration of the speech complete timeout and a final response delivered from the service endpoint. Whichever comes first takes effect. In order to rely solely on an event delivered from the speech service, the parameter speech-complete-timeout needs to be set to a higher value.

- vad-mode

This parameter is used to specify an operating mode of the Voice Activity Detector (VAD) within an integer range of [0 … 3]. A higher mode is more aggressive and, as a result, is more restrictive in reporting speech. The parameter can be overridden per MRCP session by setting the header field Sensitivity-Level in a SET-PARAMS or RECOGNIZE request. The following table shows how the Sensitivity-Level is mapped to the vad-mode.

| Sensitivity-Level | Vad-Mode |

|---|---|

| [0.00 ... 0.25) | 0 |

| [0.25 ... 0.50) | 1 |

| [0.50 ... 0.75) | 2 |

| [0.75 ... 1.00] | 3 |
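The mapping in the table can be expressed as a small function. This is a sketch in Python that mirrors the table only; the function name is hypothetical and the code is not taken from the plugin.

```python
def vad_mode_from_sensitivity(level):
    """Map an MRCP Sensitivity-Level in [0.0, 1.0] to a vad-mode in 0..3."""
    if not 0.0 <= level <= 1.0:
        raise ValueError("Sensitivity-Level must be within [0.0, 1.0]")
    if level < 0.25:
        return 0
    if level < 0.50:
        return 1
    if level < 0.75:
        return 2
    return 3  # [0.75 ... 1.00], upper bound inclusive
```

For example, a Sensitivity-Level of 0.5 falls into the third interval and selects vad-mode 2.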

¶ 4.8 Specifying DTMF Input Parameters

While the default parameters specified for the DTMF input detector are sufficient for general use, various parameters can be adjusted to better suit a particular requirement.

- dtmf-interdigit-timeout

This parameter is used to set an inter-digit timeout on DTMF input. The parameter can be overridden per MRCP session by setting the header field DTMF-Interdigit-Timeout in a SET-PARAMS or RECOGNIZE request.

- dtmf-term-timeout

This parameter is used to set a termination timeout on DTMF input and is in effect when dtmf-term-char is set and there is a match for an input grammar. The parameter can be overridden per MRCP session by setting the header field DTMF-Term-Timeout in a SET-PARAMS or RECOGNIZE request.

- dtmf-term-char

This parameter is used to set a character terminating DTMF input. The parameter can be overridden per MRCP session by setting the header field DTMF-Term-Char in a SET-PARAMS or RECOGNIZE request.

¶ 4.6 Specifying No-Input and Recognition Timeouts

- noinput-timeout

This parameter is used to trigger a no-input event. The parameter can be overridden per MRCP session by setting the header field No-Input-Timeout in a SET-PARAMS or RECOGNIZE request.

- input-timeout

This parameter is used to limit input (recognition) time. The parameter can be overridden per MRCP session by setting the header field Recognition-Timeout in a SET-PARAMS or RECOGNIZE request.

¶ 4.9 Specifying Speech Recognition Mode

Single Utterance Mode

When the configuration parameter single-utterance is set to true, which is the default, recognition is performed in the single utterance mode and is terminated upon an expiration of the speech complete timeout or a final response delivered from the service endpoint.

Continuous Recognition Mode

In the continuous speech recognition mode, when the configuration parameter single-utterance is set to false, recognition is terminated upon an expiration of the speech complete timeout, which is recommended to be set in the range of 1500 msec to 3000 msec. The service endpoint may return multiple results (sub utterances), which are concatenated and sent back to the MRCP client in a single RECOGNITION-COMPLETE event.

The parameter single-utterance can be overridden per MRCP session by setting the header field Vendor-Specific-Parameters in a SET-PARAMS or RECOGNIZE request, where the parameter name is single-utterance and acceptable values are true and false.
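A client typically composes the header field as a list of name=value pairs. The sketch below, written in Python, assumes the MRCPv2 syntax in which pairs are separated by ';'; the helper name vendor_specific_header is hypothetical, and how the resulting line is attached to a request depends on the MRCP client library in use.

```python
def vendor_specific_header(params):
    """Format a Vendor-Specific-Parameters header line from a dict."""
    def fmt(value):
        # Booleans are written in lower case, as in the examples of this guide.
        if isinstance(value, bool):
            return "true" if value else "false"
        return str(value)

    pairs = ";".join(f"{name}={fmt(value)}" for name, value in params.items())
    return f"Vendor-Specific-Parameters: {pairs}"


# Switch the session to the continuous recognition mode.
header = vendor_specific_header({"single-utterance": False})
```

Sending this header in a SET-PARAMS request applies the override to the MRCP session, whereas sending it in a RECOGNIZE request applies it to that request.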

¶ 4.10 Specifying Vendor Specific Parameters

The following parameters can optionally be specified by the MRCP client in SET-PARAMS, DEFINE-GRAMMAR and RECOGNIZE requests via the MRCP header field Vendor-Specific-Parameters.

| Name | Unit | Description |

|---|---|---|

| start-of-input | String | Specifies the source of start of input event sent to the client (use "service-originated" for an event originated based on a first-received interim result and "internal" for an event determined by plugin). |

| alternatives-below-threshold | Boolean | Specifies whether to return speech recognition result alternatives with the confidence score below the confidence threshold. |

| speech-start-timeout | Time interval [msec] | Specifies how long to wait in transition mode before triggering a start of speech input event. |

| skip-empty-results | Boolean | Specifies whether to implicitly initiate a new gRPC request if the current one completes with an empty result. |

| interim-result-timeout | Time interval [msec] | Specifies a timeout between interim results containing transcribed speech. If the timeout elapses, input is considered complete. |

| logging-tag | String | Specifies the logging tag. |

| tag-format | String | Specifies the format of the instance element to be returned. |

| service-uri | String | Specifies the service endpoint URI. |

| topic | String | Specifies the topic field in RecognitionParameters. |

| speech-domain | String | Specifies the speech_domain field in RecognitionParameters. |

| formatting-scheme | String | Specifies the formatting scheme field in RecognitionParameters. |

| auto-punctuate | Boolean | Specifies whether to enable automatic punctuation. |

| filter-profanity | Boolean | Specifies whether to filter profanities in recognition result. |

| include-tokenization | Boolean | Specifies whether to include tokenized recognition result. |

| discard-speaker-adaptation | Boolean | Specifies whether to discard updated speaker data, if speaker profiles are used. By default, data is stored. |

| suppress-call-recording | Boolean | Specifies whether to redact transcription results in the call logs and disable audio capture. |

| mask-load-failures | Boolean | Specifies whether errors loading external resources shall not terminate recognition. |

| suppress-initial-capitalization | Boolean | Specifies whether to suppress automatic capitalization of the first word in a sentence. |

| allow-zero-base-lm-weight | Boolean | Specifies whether custom resources (DLMs, wordsets, and others) can use the entire weight space. |

| filter-wakeup-word | Boolean | Specifies whether to remove the wakeup word from the final result. |

| end-stream-no-valid-hypotheses | Boolean | Determines whether the dialog application or the client application handles the dialog flow when ASRaaS does not return a valid hypothesis. |

| selector-channel | String | Specifies the selector channel used in the Start request. |

| selector-library | String | Specifies the selector library used in the Start request. |

| session-timeout | Time interval [sec] | Specifies the session timeout used in the Start request. |

| model-uri | String | Specifies the model URI used in the Start request. |

| model-type | String | Specifies the model type used in the Start request. |

| recognition-resources-json | String | Specifies the resources field set in RecognitionInitMessage. The value of this parameter must transparently be specified in JSON. |

| start-request-payload-json | String | Specifies the payload of the Start request. The value of this parameter must transparently be specified in JSON. |

| execute-request-payload-json | String | Specifies the payload of the Execute request. The value of this parameter must transparently be specified in JSON. |

| client-data.* | String | Specifies transparent name/value parameters set in the client_data field in RecognitionInitMessage. The name must start with a prefix "client-data.". |

| user-id | String | Specifies the user_id field set in RecognitionInitMessage. |

All the vendor-specific parameters can also be specified at the grammar-level via a built-in or SRGS XML grammar.

The following example demonstrates the use of a built-in grammar with the vendor-specific parameters alternatives-below-threshold and speech-start-timeout set to true and 100 respectively.

builtin:speech/transcribe?alternatives-below-threshold=true;speech-start-timeout=100

The following example demonstrates the use of an SRGS XML grammar with the vendor-specific parameters alternatives-below-threshold and speech-start-timeout set to true and 100 respectively.

<grammar mode="voice" root="transcribe" version="1.0" xml:lang="en-US" xmlns="http://www.w3.org/2001/06/grammar">

<meta name="scope" content="builtin"/>

<meta name="alternatives-below-threshold" content="true"/>

<meta name="speech-start-timeout" content="100"/>

<rule id="transcribe">

<one-of><item>blank</item></one-of>

</rule>

</grammar>
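When the parameters are computed at run time, the built-in grammar form can be composed programmatically. The following Python sketch is illustrative only: the function name builtin_grammar is hypothetical, and the default separator assumes that grammar-param-separator is left at ';'.

```python
def builtin_grammar(params, name="transcribe", separator=";"):
    """Compose a built-in speech grammar URI with optional parameters."""
    def fmt(value):
        # Booleans are written in lower case (true/false).
        if isinstance(value, bool):
            return "true" if value else "false"
        return str(value)

    base = f"builtin:speech/{name}"
    query = separator.join(f"{key}={fmt(value)}" for key, value in params.items())
    return f"{base}?{query}" if query else base


uri = builtin_grammar({
    "alternatives-below-threshold": True,
    "speech-start-timeout": 100,
})
```

With the values above, the function returns the same URI as the built-in grammar example in this section.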

¶ 4.11 Specifying Recognition Resources

The resources field in RecognitionInitMessage can transparently be specified in JSON by means of the vendor-specific parameter recognition-resources-json.

¶ External Reference

The following example demonstrates the use of an external reference passed to a built-in grammar.

builtin:speech/transcribe?recognition-resources-json=[{"external_reference": {"type": "DOMAIN_LM", "uri": "urn:nuance-mix:tag:model/names-places/mix.asr?=language=eng-USA"}}]

¶ Inline Wordset

The following example demonstrates the use of an inline wordset passed to a built-in grammar.

builtin:speech/transcribe?recognition-resources-json=[{"inline_wordset": "{\"PLACES\":[{\"literal\":\"La Jolla\",\"spoken\":[\"la hoya\",\"la jolla\"]},{\"literal\":\"Llanfairpwllgwyngyll\",\"spoken\":[\"lan vire pool guin gill\"]},{\"literal\":\"Abington Pigotts\"},{\"literal\":\"Steeple Morden\"},{\"literal\":\"Hoyland Common\"},{\"literal\":\"Cogenhoe\",\"spoken\":[\"cook no\"]},{\"literal\":\"Fordoun\",\"spoken\":[\"forden\",\"fordoun\"]},{\"literal\":\"Llangollen\",\"spoken\":[\"lan goth lin\",\"lan gollen\"]},{\"literal\":\"Auchenblae\"}]}", "weight_value": "0.2"}]

¶ Builtin

The following example demonstrates the use of a builtin resource in the data pack passed to a built-in grammar.

builtin:speech/transcribe?recognition-resources-json=[{"builtin": "CALENDARX", "weight_value": "0.2"}, {"builtin": "DISTANCE", "weight_value": "0.2"}]

¶ Wakeup Word

The following example demonstrates the use of wakeup words passed to a built-in grammar.

builtin:speech/transcribe?recognition-resources-json=[{"wakeup_word": {"words": ["Hi Dragon", "Hey Dragon", "Yo Dragon"]}}]

¶ 4.12 Specifying Client Data

The following example demonstrates the use of client data passed to a built-in grammar.

builtin:speech/transcribe?client-data.call-id=22abe481329346518d11a3b2b599fe00;client-data.called-number=2876001223

As a result, the call-id and called-number parameters will be set in the client_data field in RecognitionInitMessage.
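The mapping from prefixed parameters to client data entries can be illustrated as follows. This Python sketch only models the naming convention described above; it is not the plugin's implementation, and the function names are hypothetical. It splits the query on ';', so it is not suitable for parameter values that contain that character.

```python
def parse_builtin_uri(uri):
    """Split a built-in grammar URI into its path and a dict of parameters."""
    path, _, query = uri.partition("?")
    params = {}
    for pair in filter(None, query.split(";")):
        name, _, value = pair.partition("=")
        params[name] = value
    return path, params


def client_data(params, prefix="client-data."):
    """Collect parameters carrying the client-data. prefix, with the prefix removed."""
    return {
        name[len(prefix):]: value
        for name, value in params.items()
        if name.startswith(prefix)
    }


path, params = parse_builtin_uri(
    "builtin:speech/transcribe?client-data.call-id=22abe481329346518d11a3b2b599fe00;"
    "client-data.called-number=2876001223"
)
print(path, client_data(params))
```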

¶ 4.13 Specifying User ID

The following example demonstrates the use of user id passed to a built-in grammar.

builtin:speech/transcribe?user-id=126673

As a result, the user-id parameter will be set in the user_id field in RecognitionInitMessage.

¶ 4.14 Maintaining Utterances

Saving of utterances is not required for regular operation and is disabled by default. However, enabling this functionality allows utterances sent to the service endpoint to be saved and listened to later offline.

The relevant settings can be specified via the element utterance-manager.

- save-waveforms

Utterances can optionally be recorded and stored if the configuration parameter save-waveforms is set to true. The parameter can be overridden per MRCP session by setting the header field Save-Waveform in a SET-PARAMS or RECOGNIZE request.

- purge-existing

This parameter specifies whether to delete existing waveforms on start-up.

- max-file-age

This parameter specifies a time interval in minutes after expiration of which a waveform is deleted. If set to 0, there is no expiration time specified.

- max-file-count

This parameter specifies the maximum number of waveforms to store. If the specified number is reached, the oldest waveform is deleted. If set to 0, there is no limit specified.

- waveform-base-uri

This parameter specifies the base URI used to compose an absolute waveform URI returned in the header field Waveform-URI in response to a RECOGNIZE request.

- waveform-folder

This parameter specifies a path to the directory used to store waveforms in. The directory defaults to ${UniMRCPInstallDir}/var.
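The interplay of max-file-age and max-file-count can be illustrated with a short sketch. The following Python function models the retention rules as described above; it is not the plugin's implementation. The function name and the exact boundary behavior (a file is removed once the count is exceeded) are assumptions made for illustration.

```python
def select_expired(files, now, max_file_age=0, max_file_count=0):
    """Return the names of files to delete, oldest first.

    files          list of (name, modification time in seconds)
    now            current time in seconds
    max_file_age   age limit in minutes, 0 means no expiration
    max_file_count maximum number of files to keep, 0 means no limit
    """
    ordered = sorted(files, key=lambda entry: entry[1])
    expired = []
    if max_file_age > 0:
        cutoff = now - max_file_age * 60
        expired = [name for name, mtime in ordered if mtime < cutoff]
    removed = set(expired)
    kept = [entry for entry in ordered if entry[0] not in removed]
    if max_file_count > 0 and len(kept) > max_file_count:
        # Drop the oldest of the remaining files until the limit is met.
        overflow = len(kept) - max_file_count
        expired += [name for name, _ in kept[:overflow]]
    return expired


files = [("a.wav", 0), ("b.wav", 3000), ("c.wav", 3500), ("d.wav", 3590)]
print(select_expired(files, now=3600, max_file_age=10, max_file_count=2))
```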

¶ 4.15 Maintaining Recognition Details Records

Producing recognition details records (RDR) is not required for regular operation and is disabled by default. However, enabling this functionality allows the details of each recognition attempt to be stored in a separate file and analyzed later offline. The RDRs are stored in the JSON format.

The relevant settings can be specified via the element rdr-manager.

- save-records

This parameter specifies whether to save recognition details records or not.

- purge-existing

This parameter specifies whether to delete existing records on start-up.

- max-file-age

This parameter specifies a time interval in minutes after expiration of which a record is deleted. If set to 0, there is no expiration time specified.

- max-file-count

This parameter specifies the maximum number of records to store. If the specified number is reached, the oldest record is deleted. If set to 0, there is no limit specified.

- record-folder

This parameter specifies a path to the directory used to store records in. The directory defaults to ${UniMRCPInstallDir}/var.

¶ 5 Recognition Grammars and Results

¶ 5.1 Using Built-in Speech Transcription

For generic speech transcription, when no speech contexts are defined, the pre-set identifier transcribe must be used by the MRCP client in a RECOGNIZE request as follows:

builtin:speech/transcribe

The name of the identifier transcribe can be changed from the configuration file umsnuancebs.xml.

¶ 5.2 Using Built-in DTMF Grammars

Pre-set built-in DTMF grammars can be referenced by the MRCP client in a RECOGNIZE request as follows:

builtin:dtmf/$id

Where $id is a unique string identifier of the built-in DTMF grammar.

Note that only the DTMF grammar identifier digits is currently supported.

Built-in DTMF digits can also be referenced by metadata in SRGS XML grammar. The following example is equivalent to the built-in grammar above.

<grammar mode="dtmf" root="digits" version="1.0"

xml:lang="en-US"

xmlns="http://www.w3.org/2001/06/grammar">

<meta name="scope" content="builtin"/>

<rule id="digits"><one-of/></rule>

</grammar>

Where the root rule name identifies a built-in DTMF grammar.

Results received from the Nuance service are transformed to a certain data structure and sent to the MRCP client in a RECOGNITION-COMPLETE event. The way results are composed can be adjusted via the <results> element in the configuration file umsnuancebs.xml.

NLSML Format

If the format attribute is set to standard, which is the default setting, then the header field Content-Type is set to application/x-nlsml and the body of a RECOGNITION-COMPLETE event is set to an NLSML result composed as follows.

input

The <input> element in an NLSML result is set to the transcribed text.

instance

By default, the <instance> element in the NLSML result is composed based on an XML representation of the returned intent. This behavior can be adjusted via the tag-format attribute, which accepts the following values.

- semantics/xml

The default setting. The intent is represented in XML.

- semantics/json

The intent is represented in JSON.

- swi-semantics/xml

The intent is set in an inner <SWI_meaning> element and is represented in XML.

- swi-semantics/json

The intent is set in an inner <SWI_meaning> element and is represented in JSON.
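On the client side, an NLSML result composed with tag-format set to semantics/json can be unpacked in two steps: the XML envelope first, then the JSON carried in the <instance> element. The following Python sketch illustrates this with the standard library. The function name parse_nlsml is hypothetical, and the sample body is a shortened, made-up result that follows the layout of the results shown in this guide.

```python
import json
import xml.etree.ElementTree as ET


def parse_nlsml(body):
    """Extract the input text and the JSON instance from an NLSML result."""
    root = ET.fromstring(body)
    interpretation = root.find("interpretation")
    instance = interpretation.find("instance")
    return {
        "grammar": interpretation.get("grammar"),
        "confidence": float(interpretation.get("confidence", "0")),
        # The <input> element holds the transcribed text.
        "input": interpretation.findtext("input"),
        # With semantics/json, the <instance> element holds a JSON document.
        "instance": json.loads(instance.text),
    }


sample = (
    '<?xml version="1.0"?><result>'
    '<interpretation grammar="builtin:speech/transcribe" confidence="0.755">'
    '<instance>{"response":{"payload":{"channel":"default"}}}</instance>'
    '<input mode="speech">I would like to make a reservation</input>'
    '</interpretation></result>'
)
result = parse_nlsml(sample)
print(result["input"], result["instance"])
```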

JSON Format

If the format attribute is set to json, then the header field Content-Type is set to application/json and the body of a RECOGNITION-COMPLETE event is set to a JSON representation of the intent.

The format attribute can be specified by the MRCP client per individual MRCP RECOGNIZE request as a query input attribute to the built-in speech grammar. For example:

builtin:speech/transcribe?format=json

The format attribute can also be specified in SRGS XML grammar. For example:

<grammar mode="voice" root="transcribe" version="1.0"

xml:lang="en-US"

xmlns="http://www.w3.org/2001/06/grammar">

<meta name="scope" content="builtin"/>

<meta name="format" content="json"/>

<rule id="transcribe"><one-of/></rule>

</grammar>

¶ 6 Monitoring Usage Details

The number of in-use and total licensed channels can be monitored in several alternate ways. There is a set of actions which can take place on certain events. The behavior is configurable via the element monitoring-agent, which contains two event handlers: usage-change-handler and usage-refresh-handler.

While the usage-change-handler is invoked on every acquisition and release of a licensed channel, the usage-refresh-handler is invoked periodically on expiration of a timeout specified by the attribute refresh-period.

The following actions can be specified for either of the two handlers.

¶ 6.1 Log Usage

The action log-usage logs the following data in the order specified.

- The number of currently in-use channels.

- The maximum number of channels used concurrently.

- The total number of licensed channels.

The following is a sample log statement, indicating 0 in-use, 0 max-used and 2 total channels.

[NOTICE] NUANCEBS Usage: 0/0/2
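For monitoring purposes, such log statements can be picked up by an external script. The following Python sketch extracts the three counters from a log line with a regular expression. It assumes the statement has the exact form shown above; the function name parse_usage is hypothetical.

```python
import re

# in-use / max-used / total, in the order logged by the log-usage action
USAGE_RE = re.compile(r"NUANCEBS Usage: (\d+)/(\d+)/(\d+)")


def parse_usage(line):
    """Return the usage counters found in a log line, or None if there are none."""
    match = USAGE_RE.search(line)
    if match is None:
        return None
    in_use, max_used, total = map(int, match.groups())
    return {"in_use": in_use, "max_used": max_used, "total": total}


print(parse_usage("[NOTICE] NUANCEBS Usage: 0/0/2"))
```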

¶ 6.2 Update Usage

The action update-usage writes the following data to a status file umsnuancebs-usage.status, located by default in the directory ${UniMRCPInstallDir}/var/status.

- The number of currently in-use channels.

- The maximum number of channels used concurrently.

- The total number of licensed channels.

- The current status of the license permit.

- The license server alarm. Set to on, if the license server is not available for more than one hour; otherwise, set to off. This parameter is maintained only if the license server is used.

The following is a sample content of the status file.

in-use channels: 0

max used channels: 0

total channels: 2

license permit: true

licserver alarm: off
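Since the status file consists of plain "name: value" lines, it can be read by a monitoring script with very little code. The following Python sketch parses content of the form shown above into a dictionary. The function names are hypothetical, and the check for a free channel is only an example of how the values might be used.

```python
def parse_status(text):
    """Parse 'name: value' lines of a status file into a dictionary."""
    status = {}
    for line in text.splitlines():
        key, sep, value = line.partition(":")
        if not sep:
            continue  # skip blank or malformed lines
        status[key.strip()] = value.strip()
    return status


def has_free_channel(status):
    """Tell whether at least one licensed channel is currently unused."""
    return int(status["in-use channels"]) < int(status["total channels"])


sample_status = """in-use channels: 0
max used channels: 0
total channels: 2
license permit: true
licserver alarm: off
"""
status = parse_status(sample_status)
print(status, has_free_channel(status))
```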

¶ 6.3 Dump Channels

The action dump-channels writes the identifiers of in-use channels to a status file umsnuancebs-channels.status, located by default in the directory ${UniMRCPInstallDir}/var/status.

¶ 7 Usage Examples

¶ 7.1 Conversation Flow

This example demonstrates how to kick off a new conversation and then interact with the caller in a loop.

C->S:

MRCP/2.0 394 RECOGNIZE 1

Channel-Identifier: 06ab6c8dd9555747@speechrecog

Content-Type: text/uri-list

Cancel-If-Queue: false

Start-Input-Timers: true

No-Input-Timeout: 10000

Speech-Complete-Timeout: 1000

Speech-Incomplete-Timeout: 15000

Content-Length: 126

builtin:speech/transcribe?method=Execute;model-uri=urn:nuance-mix:tag:model/A7315_C450210/mix.dialog;tag-format=semantics/json

S->C:

MRCP/2.0 83 1 200 IN-PROGRESS

Channel-Identifier: 06ab6c8dd9555747@speechrecog

S->C:

MRCP/2.0 115 START-OF-INPUT 1 IN-PROGRESS

Channel-Identifier: 06ab6c8dd9555747@speechrecog

Input-Type: speech

S->C:

MRCP/2.0 2367 RECOGNITION-COMPLETE 1 COMPLETE

Channel-Identifier: 06ab6c8dd9555747@speechrecog

Completion-Cause: 000 success

Content-Type: application/x-nlsml

Content-Length: 2180

<?xml version="1.0"?><result><interpretation grammar="builtin:speech/transcribe" confidence="0"><instance>{"response":{"payload":{"messages":[{"nlg":[{"text":"Windborne Airlines. Thanks for flying with us!"}],"visual":[{"text":"Windborne Airlines. Thanks for flying with us!"}],"view":{},"language":"en-US","ttsParameters":{"voice":{"language":"en-US"}},"channel":"default"}],"qaAction":{"message":{"nlg":[{"text":"How can I help today? Or say What are my options?"}],"language":"en-US","ttsParameters":{"voice":{"language":"en-US"}},"channel":"default"},"view":{},"recognitionSettings":{"dtmfMappings":[{"id":"eGlobalCommands","value":"escalate","dtmfKey":"0"}],"collectionSettings":{"timeout":"7000ms","completeTimeout":"0ms","incompleteTimeout":"500ms","maxSpeechTimeout":"12000ms"},"speechSettings":{"sensitivity":"0.5","bargeInType":"speech","speedVsAccuracy":"0.5"},"dtmfSettings":{"interDigitTimeout":"3000ms","termTimeout":"2000ms","termChar":"#"}},"orchestrationResourceReference":{"recognitionResources":[{"externalReference":{"type":"DOMAIN_LM","uri":"urn:nuance-mix:tag:model/A7315_C150290/mix.asr?=language=eng-USA"}}],"interpretationResources":[{"externalReference":{"type":"SEMANTIC_MODEL","uri":"urn:nuance-mix:tag:model/A7315_C150290/mix.nlu?=language=eng-USA"}}]}},"channel":"default"}},"asrResult":{}}</instance><input mode="speech">null</input></interpretation></result>
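Before the next RECOGNIZE request is issued, the client typically needs to take the prompts to play out of the result above. The following Python sketch walks the JSON carried in the <instance> element and collects the nlg texts of the messages and of the qaAction. The field names are taken from the results in this exchange; the function names are hypothetical, and treating the presence of endAction as the end of the dialog is an assumption based on the final result of this exchange.

```python
import json


def collect_prompts(instance):
    """Collect the nlg texts of all messages, followed by the qaAction prompt."""
    payload = instance.get("response", {}).get("payload", {})
    texts = []
    for message in payload.get("messages", []):
        texts += [item["text"] for item in message.get("nlg", [])]
    qa_message = payload.get("qaAction", {}).get("message", {})
    texts += [item["text"] for item in qa_message.get("nlg", [])]
    return texts


def is_end_of_dialog(instance):
    """Assumption: an endAction in the payload means the loop can be left."""
    return "endAction" in instance.get("response", {}).get("payload", {})


# Shortened copies of the two result bodies of this exchange.
first = json.loads(
    '{"response":{"payload":{"messages":[{"nlg":[{"text":'
    '"Windborne Airlines. Thanks for flying with us!"}]}],'
    '"qaAction":{"message":{"nlg":[{"text":'
    '"How can I help today? Or say What are my options?"}]}}}}}'
)
last = json.loads(
    '{"response":{"payload":{"endAction":{"data":{},"id":"ma1025_EndCall_EA"},'
    '"channel":"default"}}}'
)
print(collect_prompts(first), is_end_of_dialog(first), is_end_of_dialog(last))
```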

C->S:

MRCP/2.0 371 RECOGNIZE 4

Channel-Identifier: 06ab6c8dd9555747@speechrecog

Content-Type: text/uri-list

Cancel-If-Queue: false

Start-Input-Timers: false

Speech-Incomplete-Timeout: 15000

No-Input-Timeout: 10000

Speech-Complete-Timeout: 1000

Content-Length: 93

builtin:speech/transcribe?method=ExecuteStream;tag-format=semantics/json

builtin:dtmf/digits

S->C:

MRCP/2.0 83 4 200 IN-PROGRESS

Channel-Identifier: 06ab6c8dd9555747@speechrecog

C->S:

MRCP/2.0 86 START-INPUT-TIMERS 5

Channel-Identifier: 06ab6c8dd9555747@speechrecog

S->C:

MRCP/2.0 80 5 200 COMPLETE

Channel-Identifier: 06ab6c8dd9555747@speechrecog

S->C:

MRCP/2.0 115 START-OF-INPUT 4 IN-PROGRESS

Channel-Identifier: 06ab6c8dd9555747@speechrecog

Input-Type: speech

S->C:

MRCP/2.0 3339 RECOGNITION-COMPLETE 4 COMPLETE

Channel-Identifier: 06ab6c8dd9555747@speechrecog

Completion-Cause: 000 success

Content-Type: application/x-nlsml

Content-Length: 3152

<?xml version="1.0"?><result><interpretation grammar="builtin:speech/transcribe" confidence="0.755"><instance>{"response":{"payload":{"endAction":{"data":{},"id":"ma1025_EndCall_EA"},"channel":"default"}},"asrResult":{"absEndMs":2800,"utteranceInfo":{"durationMs":2800,"dsp":{"snrEstimateDb":27,"level":9217,"numChannels":1,"initialSilenceMs":220,"initialEnergy":-64.6157,"finalEnergy":-60.252,"meanEnergy":120.419}},"hypotheses":[{"confidence":0.755,"averageConfidence":0.965,"formattedText":"I would like to make a reservation","minimallyFormattedText":"I would like to make a reservation","words":[{"text":"I","confidence":0.972,"startMs":220,"endMs":380},{"text":"would","confidence":0.984,"startMs":380,"endMs":500},{"text":"like","confidence":0.985,"startMs":500,"endMs":720},{"text":"to","confidence":0.979,"startMs":720,"endMs":820},{"text":"make","confidence":0.976,"startMs":820,"endMs":1060},{"text":"a","confidence":0.819,"startMs":1060,"endMs":1160},{"text":"reservation","confidence":0.969,"startMs":1160,"endMs":1700}],"encryptedTokenization":"Cdr7W4UJ/jpyk3kbQNqNEAfowmP9xl/+bm88BpU6MlPOGnFWkFVLESwGhnrBey1xI5qzNFLkQ/bgl2nP2rCU8wNEHKLgGyRTtwMqLhtFJINGWr0F1P+ComVnXReEthIU/GO/rSN2k2WjwQmslzLdVMPPC8ERqoFNO5bpqd7A09905tiCg/79bevF/HiRI4EMt27XvkRVGtpr3CePSE7BTdlPr50yWfEjfzyT7erJL4Fl6sOcVAwO5Af61Hj61+E3mRVFeYJsjmqzNLtRzdJ1i0pYOXaa+OPSscqCWwxfoLZ5tGHtY0DdoyR0ByJomepmQF/BCckmTblMHhozXaFDwQ==F0tpGvJcZzhQ6ML96woXMbNuQQfAXkhAUQW/DNFuN7pLr2Z3fwPC3c8sj6cOsYy3AGeD4u5h5NU2XxSlf3JynxOmLG8tq2M2FpqIPUTyZDi8ORLB9SO4EbwzdQMqP07XGiukgCc08p/sO/+rHctSXkLG//SxVt8nTrIiIQmk5s9wm0A1F+s+4aAriG1/IZzEYqUghfHXNejAY3i0SAyHxWUr8j38Yz8TgtvMysyO6m3xlEGN+w+qL7B3/hEgxuPsCtFokFDyuk21LtJhd6CiCIj69nyHcCW61oZo7jpEJgwxSR4FBkGNNmTas35e5JmMQEAjGt4oW2X6lWW9rZJ+2qpC73FICTSauE01uBdcuXUqDUKQ4cYJekbYyFoTe8JzLJLapJXk75ZAx4Ak7gvfLoY475bw2hAJcEfNy3fcKpuxrj2Exv7eiMsCZVVextQEDxXLfj+CWRlwhS7gyKxdyAzkEh++WRd/31OiMf7shXfrt1vPGGNcUtklKkMN07fLWybmHT6C1Nf9rrQt+oYjR1pflbFTSI834wp+k5CmHDSI9VkjC7mPg+EgfpM0n0TsXEcEaFGe6d/PVhiwFW4LTRJuEuTJgLMssc+HEQPthWab8eP9czCeDhYjusJ8hhYgKpZI5UiGc+GMI2T7hJnoVCoIHUBWlPY8OeF0/BeCv08ZZGoBPhR2Aig30jwVzOOpzPgCdD9DGq/RC1zTX4n9BRHuWCZwf67IjcR7TJm0EoKkym+2XlMpfqyrrKYGke369VPReQat4bKuaqB+9GS8hpEfjjDopFY2puq/irWBmmw="}],"dataPack":{"language":"eng-USA","topic":"GEN","version":"4.10.1","id":"GMT20221214200856"}}}</instance><input mode="speech">I would like to make a reservation</input></interpretation></result>

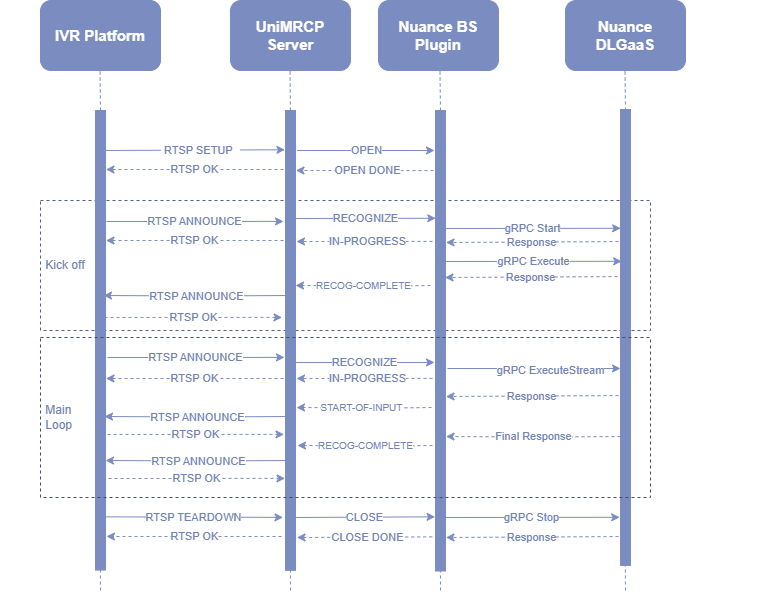

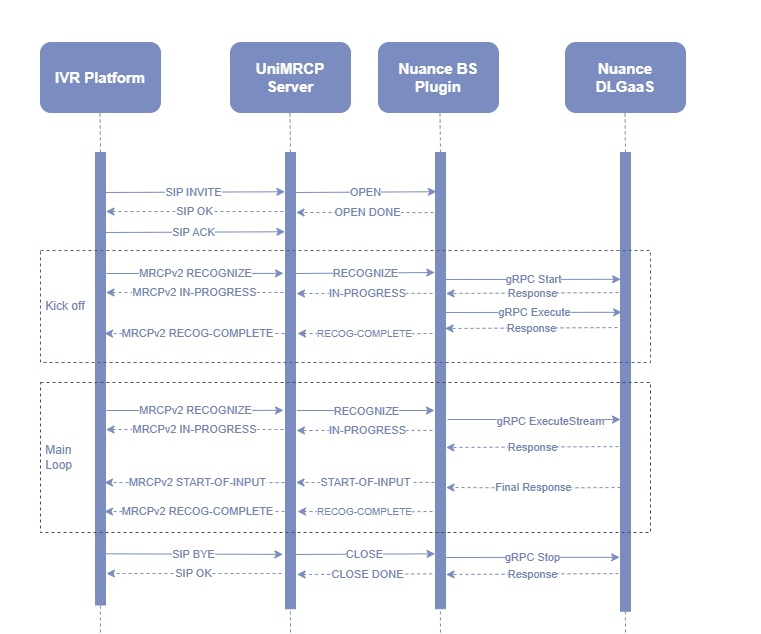

¶ 8 Sequence Diagrams

The following sequence diagrams outline common interactions between all the main components involved in a typical recognition session performed over MRCPv1 and MRCPv2 respectively.

¶ 8.1 MRCPv1

¶ 8.2 MRCPv2

¶ 9 Security Considerations

¶ 9.1 Network Connection

All the data is carried over an HTTP/2 connection via gRPC streaming.

RPM Installation Manual Red Hat / CentOS

Deb Installation Manual Debian / Ubuntu